By 2026, the conversation around AI will shift from “Should we use it?” to “How do we use it responsibly and at scale?”

Agentic AI is at the center of that shift. Unlike traditional automation, these systems don’t just follow rules—they support decisions, adapt to context, and help teams move faster. But the real opportunity isn’t in the technology itself. It’s in how organisations apply it to solve real problems.

Start with the problem, not the tool

One of the biggest barriers to adoption today isn’t capability—it’s clarity.

With so many tools, vendors, and promises in the market, many organisations struggle with a simple question: Where do we start?

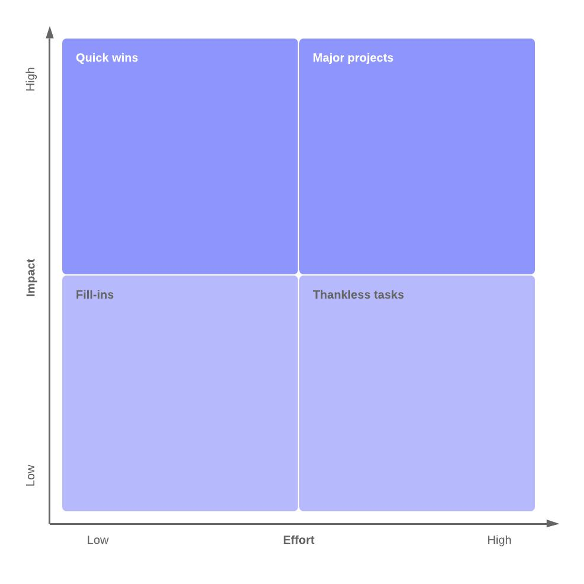

At Mondia, the approach has been practical. Instead of chasing trends, we focused on identifying real operational pain points and prioritising them based on impact and effort. That meant starting small—testing embedded AI features and targeted use cases—before moving into more advanced applications.
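One way to picture prioritising by impact and effort is a simple scoring pass over a backlog. The sketch below is illustrative only: the `PainPoint` class, the 1-to-5 scales, the impact-to-effort ratio, and the sample entries are all assumptions, not a description of Mondia's actual process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PainPoint:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (low) to 5 (high)


def prioritise(pain_points: list[PainPoint]) -> list[PainPoint]:
    """Order pain points by impact-to-effort ratio, highest first."""
    return sorted(pain_points, key=lambda p: p.impact / p.effort, reverse=True)


# Hypothetical backlog with made-up scores, for illustration only.
backlog = [
    PainPoint("manual report compilation", impact=4, effort=2),
    PainPoint("knowledge scattered across wikis", impact=5, effort=4),
    PainPoint("meeting notes written by hand", impact=3, effort=1),
]

ranked = prioritise(backlog)
for p in ranked:
    print(f"{p.name}: {p.impact / p.effort:.2f}")
```

A ratio is only one possible ranking rule. The point is that small, low-effort wins surface first, which matches the idea of starting small.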

The lesson is simple: AI adoption works best when it’s grounded in real business needs, not experimentation for its own sake.

And when that clarity is in place, the value of AI becomes much easier to see in practice.

Where AI is already making a difference

The most immediate value often comes from improving how teams work day-to-day.

We’re already seeing strong impact in areas like:

- AI-assisted coding, improving both speed and quality of output

- Internal knowledge hubs, helping employees quickly access company know-how

- Automation of monitoring, reporting, and testing

- General tasks like text/meeting summarisation, presentations, ideation and analysis

These aren’t futuristic use cases; they’re practical applications that reduce friction and improve efficiency today.

But as these use cases expand, a new question naturally follows: how much autonomy should AI actually have?

Autonomy needs boundaries

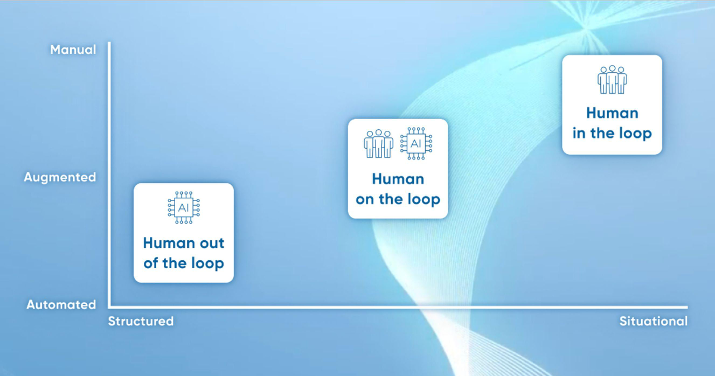

As AI becomes more capable, the conversation naturally shifts to autonomy. But more autonomy doesn’t always mean more value.

Autonomy in agentic AI introduces risks that are inherently more dynamic than those seen in traditional systems. This is largely driven by uncertainty and user unfamiliarity, particularly as new users shift toward systems that depend on clearly defined parameters to function effectively. As a result, risks can emerge in interconnected ways: unintended data exposure as AI agents interact across multiple systems, actions that exceed an agent’s intended permissions, and agents being misled by bad inputs from connected systems.

Achieving value therefore depends on one critical principle: predictable autonomy.

That means clearly defining:

- What an AI system can decide

- What it can recommend

- And where human approval is still required
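The three categories above can be written down as an explicit policy table. The following is a minimal sketch under stated assumptions: the `Autonomy` levels, the action names, and the `resolve` helper are hypothetical, and unknown actions are assumed to fall back to the strictest level.

```python
from enum import Enum
from typing import Optional


class Autonomy(Enum):
    DECIDE = "decide"        # the agent may act on its own
    RECOMMEND = "recommend"  # the agent may only propose; a person acts
    APPROVAL = "approval"    # the agent may act only after human sign-off


# Hypothetical policy table; the action names are illustrative.
POLICY = {
    "summarise_meeting": Autonomy.DECIDE,
    "suggest_code_fix": Autonomy.RECOMMEND,
    "change_production_config": Autonomy.APPROVAL,
}


def resolve(action: str, approved_by: Optional[str] = None) -> str:
    """Return 'execute', 'propose', or 'blocked' for a requested action."""
    # Actions missing from the table get the strictest level by default.
    level = POLICY.get(action, Autonomy.APPROVAL)
    if level is Autonomy.DECIDE:
        return "execute"
    if level is Autonomy.RECOMMEND:
        return "propose"
    # APPROVAL: run only when a named person has signed off.
    return "execute" if approved_by else "blocked"


print(resolve("summarise_meeting"))
print(resolve("change_production_config"))
print(resolve("change_production_config", approved_by="ops-lead"))
```

Keeping the table in one place makes the agent's behaviour predictable: anyone can read what it may decide, what it may only recommend, and what waits for a person.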

At Mondia, this is supported by built-in checks, feedback loops, and clear accountability. Measurement processes track productivity, quality, and scalability, and access layers are kept clearly separated, so that AI can assist and accelerate work. The responsibility to moderate outputs and identify problems, however, always stays with people. This balance is essential, not just for control but for trust.

And once autonomy is defined, the next challenge is ensuring it operates safely at scale.

Security and governance can’t be an afterthought

Agentic AI introduces a new kind of risk. It’s not just about protecting systems; it’s about managing how AI behaves across them. To address these risks, organisations need a methodology like Secure-by-Design, which embeds security, resilience, and accountability into AI systems from the earliest stages.

Practically, this means:

- Controlling data access and permissions: ensuring AI agents have defined boundaries and kill-switches.

- Monitoring AI behaviour, not just outputs: mandatory auditability of how agents act across systems.

- Designing systems with failure in mind: anticipating errors, misclassifications, or misuse rather than reacting afterward.
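To make these three practices concrete, here is a minimal sketch of an agent wrapper with defined scopes, a kill-switch, and an audit trail. Everything in it is an assumption for illustration: the `GuardedAgent` class, the scope strings, and the log fields are hypothetical and do not describe any particular product or Mondia's implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GuardedAgent:
    name: str
    allowed_scopes: frozenset[str]  # defined boundary: anything else is denied
    killed: bool = False            # kill-switch state
    audit_log: list[dict] = field(default_factory=list)

    def kill(self) -> None:
        """Stop the agent from taking any further action."""
        self.killed = True

    def act(self, scope: str, action: str) -> bool:
        """Record the attempt and return whether it is permitted."""
        # Deny by default: the agent must be alive and the scope allowed.
        allowed = not self.killed and scope in self.allowed_scopes
        # Log behaviour, not just outcomes: denied attempts are kept too.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "scope": scope,
            "action": action,
            "allowed": allowed,
        })
        return allowed


agent = GuardedAgent("reporting-agent", frozenset({"reports:read"}))
first = agent.act("reports:read", "fetch weekly report")
second = agent.act("billing:write", "issue refund")
agent.kill()
third = agent.act("reports:read", "fetch weekly report")
```

In this sketch the out-of-scope attempt and the post-kill attempt are both refused, and all three attempts appear in `audit_log`, so a reviewer can see what the agent tried to do as well as what it did.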

If an organisation cannot clearly define what an AI system is allowed to do, that system isn’t ready to scale.

However, even the most robust systems won’t succeed without one critical element: people.

AI adoption is a people challenge

Technology is only part of the equation.

Successful adoption depends on how teams understand and use AI in their daily work. For most employees, AI doesn’t appear as a “system”; it shows up inside tools they already use, supporting tasks and decisions. By positioning AI as an assistive collaborator that reduces friction and handles repetitive tasks, organisations can engage both technical and non-technical teams and help everyone focus on higher-value work.

That’s why training, clarity, and gradual adoption matter. Without guardrails and proper onboarding, organisations risk higher costs, resistance to adoption, inconsistent output, and long-term inefficiencies.

With the right foundations in place – strategy, governance, and people – the focus can then shift to long-term impact.

Looking ahead

The opportunity for agentic AI is clear—especially in industries like telco, gaming, and digital services. From personalised user journeys and smarter recommendations to faster product delivery and operational efficiency, the use cases are growing quickly.

At Mondia, we see this as a natural extension of how we already create value—connecting digital services to large-scale audiences, optimising user experiences, and enabling partners to scale efficiently. As AI continues to evolve, it will play a bigger role in how we design products, power marketplaces, and deliver more personalised, data-driven experiences across our ecosystem.

The takeaway

Agentic AI isn’t about replacing people or chasing the latest trend.

It’s about building systems that help organisations operate better—faster, smarter, and with more control.

The companies that succeed in 2026 won’t be the ones that adopted AI first. They’ll be the ones that adopted it right.

Sources:

- Deloitte. How can tech leaders manage emerging generative AI risks today while keeping the future in mind? (2025).

- Deloitte. Four data and model quality challenges tied to generative AI. (2025).

- Harvard Business Review. Managing the risks of generative AI. (2023).

- CyberRisk Alliance. 4 key takeaways from NIST’s new guide on AI cyber threats. (2024).

- CIO. Generative AI Hallucinations: What Can IT Do? (2023).

- Forbes. Becoming A Frontier Firm: How Agentic AI Is Redefining The Intelligent Enterprise. (2025).

- Unite.AI. What Enterprises Are Getting Wrong About Agentic AI. (2025).

About the Authors

Khaleel Kilani, Chief Operating Officer & Executive Director at Mondia

Khaleel leads operations at Mondia, focusing on scalability, efficiency, and turning innovation into measurable business outcomes. His approach to AI adoption is rooted in practical execution – prioritising real use cases, structured experimentation, and sustainable implementation across the organisation.

Thomas Langer, Chief Information Security & Data Officer at Mondia

Thomas oversees Mondia’s digital transformation and security strategy, ensuring innovation is built on a foundation of trust, governance, and resilience. His perspective on agentic AI centres on balancing autonomy with control, embedding security, transparency, and accountability into every stage of adoption. “Security builds trust. Data drives progress. My goal is to make both work together, so that everyone at Mondia can move fast, with confidence.”